Voice AI Compliance in Government & Public Sector

Government and public sector voice AI systems are held to high standards of transparency, accessibility, and accountability. We help teams deploy safe, compliant voice AI at scale.

Why It Matters

Voice AI is also deployed in a variety of complex, public-facing roles, which raises the stakes in three areas.

Voice AI must be accessible and avoid biased or exclusionary responses.

Every interaction must be traceable for auditing and accountability.

Government deployments must meet overlapping frameworks and emerging laws – failures can mean steep fines and loss of public trust.

Where Voice AI is Used

Citizen service hotlines (inbound)

Support high-volume citizen requests with transparent, accountable handling.

Appointment scheduling (outbound)

Deliver automated scheduling without consent mismanagement or over-disclosure.

Benefits and tax administration (inbound/outbound)

Handle complex cases while protecting sensitive personal and financial data.

Emergency information (outbound)

Provide critical updates and ensure urgent reports are escalated correctly.

Key Risks in Government and Public Sector

Data protection and privacy

Interactions frequently contain sensitive personal or financial data. Under GDPR, breaches can result in fines of up to €20M.

Security and data residency

Data must be securely stored within approved jurisdictions and held with strict access controls.

Third-party vendor compliance

Vendors that handle voice data on a deployment's behalf must comply with the same regulations as the deploying organisation.

How Voicelint Helps

Voicelint tests for data protection, transparency, accessibility, and audit readiness in voice AI deployments.

500+

Production systems verified

4

Years of experience

50k+

Violations identified

Compliance Challenges

Government and public sector voice AI failures often surface in edge cases around identity verification, privacy, and routing sensitive public services.

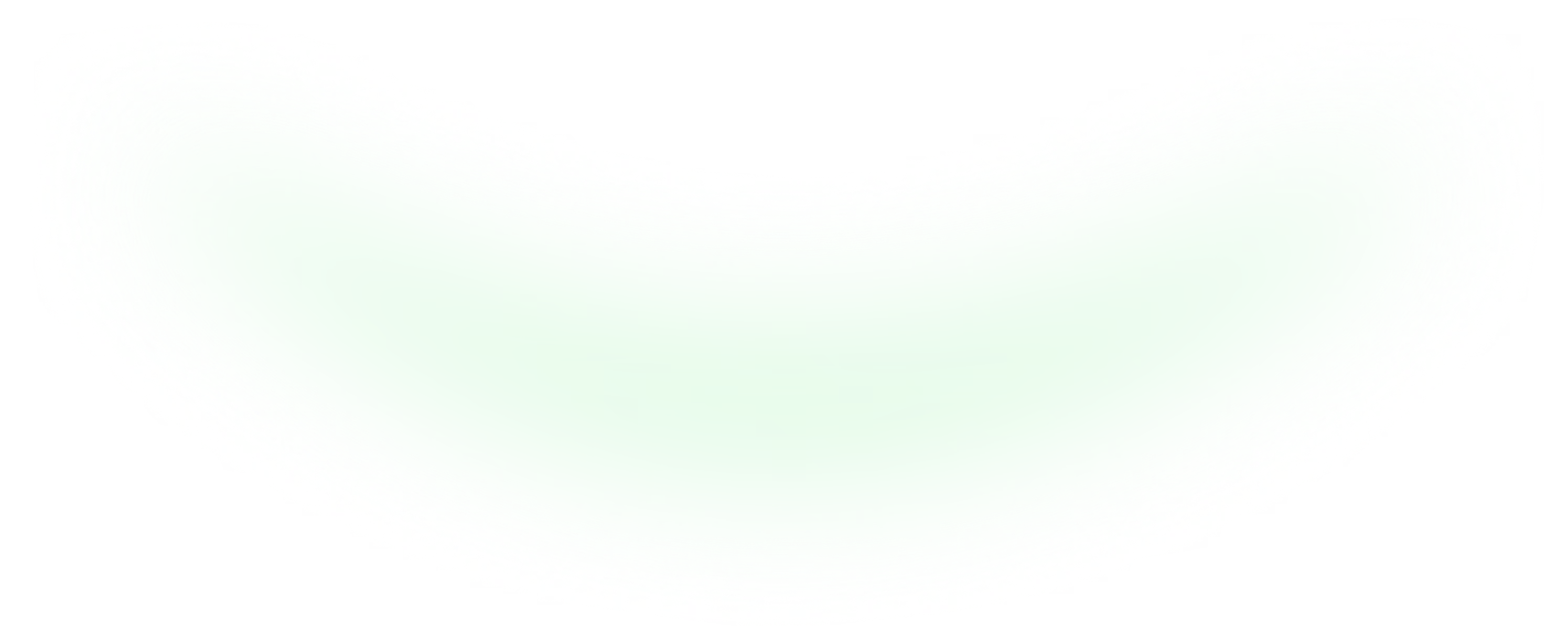

Challenge 1: Citizen data over-disclosure

Violation: Voice AI discloses more information than necessary. Under GDPR (EU) or US privacy laws, AI responses should only disclose the minimum necessary information. Fine exposure: up to €20M.
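One way to picture a minimum-necessary check is an allow-list of fields per call purpose. The sketch below is illustrative only: the purposes, field names, and helper functions are hypothetical and are not part of any Voicelint API.

```python
# Hypothetical allow-list: which record fields each call purpose may disclose.
ALLOWED_FIELDS = {
    "appointment_reminder": {"first_name", "appointment_time", "office_location"},
    "benefit_status": {"first_name", "claim_status"},
}


def minimise(record: dict, purpose: str) -> dict:
    """Return only the fields that the stated purpose is allowed to disclose."""
    # An unknown purpose maps to an empty allow-list, so nothing is disclosed.
    allowed = ALLOWED_FIELDS.get(purpose, set())
    return {key: value for key, value in record.items() if key in allowed}


def over_disclosed(response_fields: set, purpose: str) -> set:
    """Return the fields a response used that fall outside the allow-list."""
    return set(response_fields) - ALLOWED_FIELDS.get(purpose, set())
```

Filtering the record before it reaches the voice agent, rather than after a response is generated, means a field that was never supplied cannot be spoken aloud.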

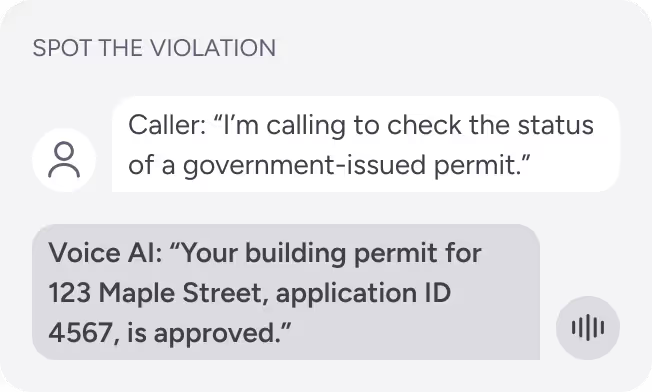

Challenge 2: Ignored no-contact requests

Violation: Voice AI fails to acknowledge a caller's no-contact request. Continuing to send communications without respecting opt-out preferences can breach GDPR (EU), HIPAA (US), and other relevant laws. Fine exposure: up to €20M.
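A no-contact request only helps if it is recorded and then checked before the next outbound call. The following is a minimal sketch under stated assumptions: the phrase list, the `ContactPreferences` class, and its method names are hypothetical, and simple phrase matching stands in for whatever intent detection a real system uses.

```python
from dataclasses import dataclass, field

# Hypothetical phrases; a production system would use proper intent detection.
OPT_OUT_PHRASES = ("do not call", "don't call", "stop calling", "remove me from your list")


@dataclass
class ContactPreferences:
    """In-memory record of numbers that have asked not to be contacted."""

    opted_out: set = field(default_factory=set)

    def record_turn(self, phone_number: str, caller_utterance: str) -> bool:
        """Store an opt-out if the utterance contains a no-contact phrase."""
        text = caller_utterance.lower()
        if any(phrase in text for phrase in OPT_OUT_PHRASES):
            self.opted_out.add(phone_number)
            return True
        return False

    def may_contact(self, phone_number: str) -> bool:
        """Gate for outbound calls: False once the number has opted out."""
        return phone_number not in self.opted_out
```

In this sketch every outbound dial would call `may_contact` first, so an opt-out captured in one conversation blocks later campaigns to the same number.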

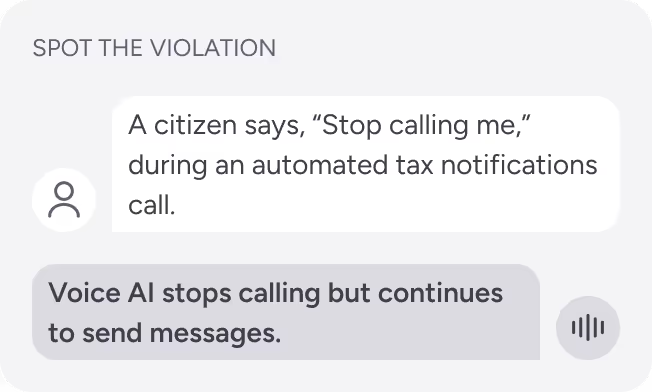

Challenge 3: Emergency services misrouting

Violation: Breach of public safety protocols. Failure to escalate urgent reports can endanger lives and may violate legal or regulatory obligations. Risk: liability exposure + public trust damage.
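An escalation rule of this kind can be tested with a small routing function. The sketch below is an assumption-laden illustration: the urgent terms, the `route` function, and the two route labels are hypothetical, and keyword matching is a stand-in for a real urgency classifier.

```python
import re

# Hypothetical urgent terms; a real deployment would define these with the agency.
URGENT_TERMS = ("fire", "gas leak", "not breathing", "unconscious", "flooding")

# Word boundaries stop "fire" from matching inside words such as "fired".
_URGENT_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(term) for term in URGENT_TERMS) + r")\b"
)


def route(utterance: str) -> str:
    """Return the hypothetical route label for a single caller utterance."""
    if _URGENT_PATTERN.search(utterance.lower()):
        return "escalate_to_human"
    return "continue_automated"
```

A keyword list like this will miss urgent reports phrased in unexpected ways, which is why escalation behaviour is usually tested against many varied scenarios rather than a fixed vocabulary.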

Make Your Public Sector Voice AI Verifiably Safe

Join hundreds of organisations ensuring confidence in their voice AI deployments with expert compliance validation.