Voice AI Compliance in Education & EdTech

Voice AI opens up real opportunities in education and EdTech – but both sectors are heavily regulated under multiple overlapping frameworks. We help teams balance innovation with safeguarding through verifiable compliance.

Why It Matters

Education and EdTech providers must safeguard minors while satisfying several frameworks at once. COPPA and GDPR-K (the GDPR’s child-specific provisions) raise the bar for handling children’s voice data, and voice-based profiling can raise biometric data concerns. Because rules keep evolving, continuous monitoring helps prevent loss of public trust and regulatory investigations.

Children’s data has elevated legal protection.

Safeguarding and bias risks are high when AI engages directly with minors.

Regulations and technologies evolve – monitoring must be continuous.

Where Voice AI is Used

AI tutoring (inbound)

Provide guidance while avoiding unsafe advice, bias, or age-inappropriate content.

Attendance tracking (outbound)

Automate outreach without mishandling student data or consent status.

Admissions and enrollment (inbound/outbound)

Support enrollment flows while enforcing consent and age-appropriate restrictions.

Automated feedback and grading (outbound)

Deliver feedback without exposing sensitive data or generating unsafe recommendations.

Parent–school communication (inbound)

Handle inquiries while verifying identity and protecting child data.

Key Risks in Education and EdTech

Education voice AI faces elevated risk around child data protection, safeguarding, and third-party data handling.

Child data protection

Voice data from minors is subject to enhanced safeguards. Fine exposure: up to $50,000/violation (COPPA).

Bias and safeguarding

Voice AI systems that engage directly with children or vulnerable users must avoid bias, unsafe advice, and harmful recommendations.

Third-party vendor risk

Systems often rely on third-party AI services, expanding data handling and accountability risks.

How Voicelint Helps

Voicelint detects violations in consent handling, disclosure, data management, and safeguarding behavior – aligned to education-specific risks and multi-framework compliance.

500+ production systems verified

4 years of experience

50k+ violations identified

Compliance Challenges

Compliance failures in education and EdTech often appear in edge cases involving consent for minors, content safety, and the routing of sensitive support requests.

Challenge 1: Course enrollment without correct consent

Violation: Collecting and processing personal data of a minor without parental consent breaches regulations such as COPPA (US) and GDPR-K (EU/UK). Fine exposure: up to $50,000/violation.

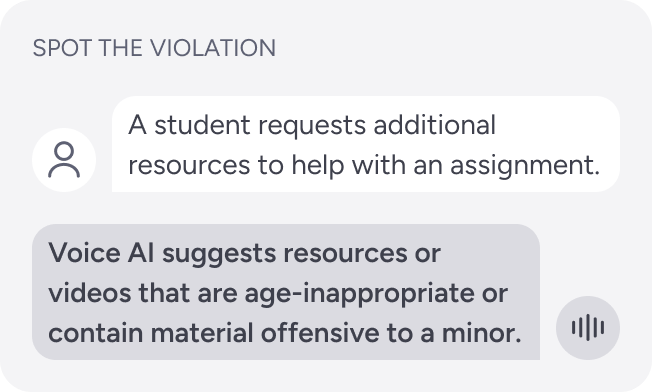
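The consent check described in Challenge 1 can be sketched as a gate that runs before an enrollment flow collects any personal data. This is a minimal illustration under stated assumptions, not Voicelint's implementation and not legal advice: the `Caller` type, the `CONSENT_AGE` table, and the `may_collect_personal_data` function are hypothetical names. The thresholds reflect that COPPA covers children under 13 and that GDPR Article 8 defaults to 16, with member states allowed to set a lower age down to 13.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative thresholds only (hypothetical table, not legal advice).
# COPPA covers children under 13; GDPR Article 8 defaults to 16,
# and individual member states may lower it to as low as 13.
CONSENT_AGE = {"US": 13, "EU_DEFAULT": 16}


@dataclass
class Caller:
    age: Optional[int]       # None when the age has not been established
    region: str              # key into CONSENT_AGE (hypothetical scheme)
    parental_consent: bool   # True when verified parental consent is on file


def may_collect_personal_data(caller: Caller) -> bool:
    """Return True only when enrollment data may be collected from this caller."""
    threshold = CONSENT_AGE.get(caller.region)
    if threshold is None or caller.age is None:
        # Unknown region or age: fail closed and treat the caller as a minor.
        return caller.parental_consent
    if caller.age < threshold:
        # Below the consent age: parental consent is required.
        return caller.parental_consent
    return True
```

The sketch fails closed: when the age or region is unknown, the caller is treated as a minor, so collection proceeds only with parental consent on file.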

Challenge 2: Inappropriate content recommendations

Violation: Recommending unsafe content to minors violates regulations such as COPPA (US) or EU child data protection frameworks. Fine exposure: up to $50,000/violation.

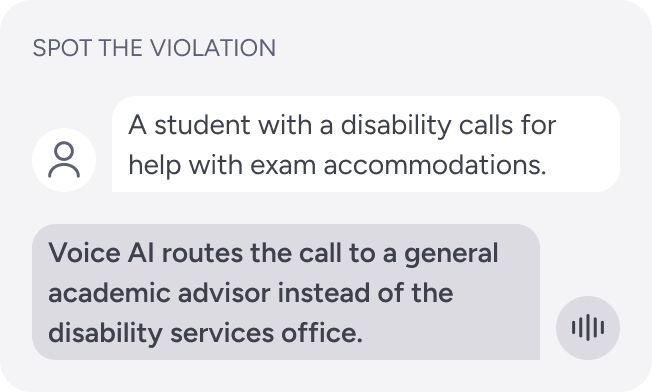

Challenge 3: Voice AI misrouting student support calls

Violation: Misrouted calls can lead to unauthorized disclosure or mishandling of sensitive information, which breaches privacy regulations such as FERPA (US). Fine exposure: up to $1,000/violation + loss of federal funding.
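One way to reduce the misrouting risk in Challenge 3 is to require identity verification before any call about sensitive records reaches a queue that can disclose them. The sketch below assumes a hypothetical topic list and hypothetical queue names (`SENSITIVE_TOPICS`, `identity_verification`, `records_office`, `general_support`); a real deployment would derive these from its own policies.

```python
from dataclasses import dataclass

# Hypothetical topic labels that would involve protected student records.
SENSITIVE_TOPICS = {"grades", "disciplinary", "health", "financial_aid"}


@dataclass
class SupportCall:
    topic: str             # topic label assigned upstream (assumed to exist)
    caller_verified: bool  # True once the caller's identity has been confirmed


def route(call: SupportCall) -> str:
    """Pick a destination queue without exposing records to unverified callers."""
    if call.topic in SENSITIVE_TOPICS:
        if not call.caller_verified:
            # Sensitive topic, unknown caller: verify before anything is disclosed.
            return "identity_verification"
        return "records_office"
    return "general_support"
```

For example, `route(SupportCall("grades", False))` sends the caller to verification first, while a verified caller asking about the same topic goes to the records queue.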

Make Your Education Voice AI Safe to Deploy

Innovate with voice AI while meeting safeguarding, consent, and privacy requirements across overlapping frameworks.