Voice AI Compliance in Finance

Voice AI in financial services comes with a high level of risk – across security, data privacy, and auditability. We help teams deploy compliant voice AI that can withstand scrutiny across multiple regulatory frameworks.

Why It Matters

Financial institutions must satisfy several regulatory frameworks at once, and inbound and outbound call flows each need their own compliance verification. The deploying company remains liable for violations, and failures bring large fines and negative public attention.

Security failures aren’t theoretical – they’re costly.

Inbound and outbound calls need separate compliance verification.

Multi-framework compliance is mandatory, not optional.

Where Voice AI is Used

Account inquiries (inbound)

Handle balance, transactions, and account questions – without exposing sensitive data.

Fraud alerts (outbound)

Contact customers quickly while avoiding impersonation risks and unsafe verification flows.

Loan applications (inbound)

Collect application details and route customers securely through regulated steps.

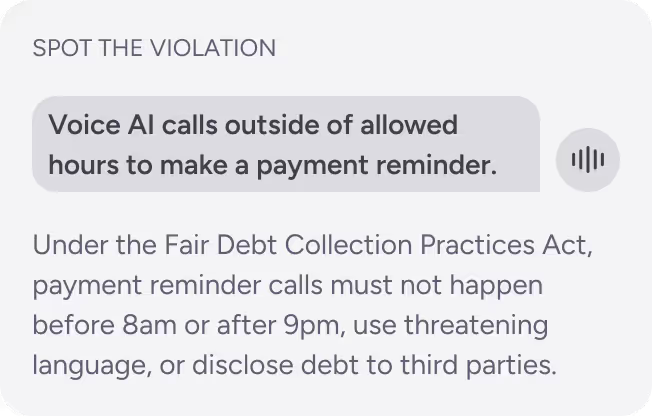

Payment reminders (outbound)

Deliver compliant reminders within allowed windows and without threatening language.

Insurance claims (inbound)

Capture claim information while ensuring proper consent and accurate documentation.

Key Risks in Finance

Voice AI systems in financial services face high exposure across security, privacy, and traceability – often under multiple regulations at once.

Security

Voice AI carries a high risk of impersonation, account takeover, and fraudulent transactions. Violations can trigger penalties of up to $100,000/month (PCI DSS) as well as reputational damage.

Data privacy

Extensive regulations apply, with violations potentially leading to fines of up to €20M or 4% of global annual turnover, whichever is higher (GDPR).

Auditability

Voice AI interactions and actions must be documented correctly, according to strict, regulated procedures.

How Voicelint Helps

Voicelint’s industry-specific verification procedures and expert analytics align with your need for rigor, traceability, and regulatory defensibility – across inbound and outbound call flows.

500+

Production systems verified

4

Years of experience

50k+

Violations identified

Compliance Challenges

Financial voice AI failures often appear in edge cases – error states, opt-outs, identity flows, and outbound timing. We test these systematically and document fine exposure and remediation paths.

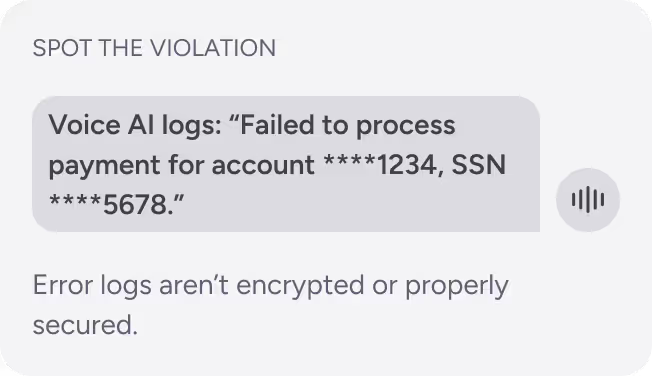

Challenge 1: Financial PII logged in error messages

Violation: Exposing sensitive financial PII in unsecured logs creates a data breach risk and violates PCI DSS and GDPR. Fine exposure: up to €20M.
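As a rough illustration of one mitigation, the sketch below scrubs card-number-like and SSN-like strings from log messages before they are written. It is a minimal Python example under stated assumptions: the two regular expressions, the placeholder tokens, and the `RedactingFilter` name are hypothetical, and simple pattern matching will miss many forms of financial PII, so it should not be read as a complete PCI DSS or GDPR control.

```python
import logging
import re

# Hypothetical patterns for illustration only; real PII detection needs a vetted detector.
# 13 to 19 digits, optionally separated by single spaces or hyphens (card-number-like).
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")
# US Social Security number in the common 3-2-4 hyphenated form.
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact(text: str) -> str:
    """Replace SSN-like and card-number-like substrings with placeholder tokens."""
    text = SSN_RE.sub("[REDACTED-SSN]", text)
    return CARD_RE.sub("[REDACTED-PAN]", text)


class RedactingFilter(logging.Filter):
    """Logging filter that redacts the fully formatted message of each record."""

    def filter(self, record: logging.LogRecord) -> bool:
        # Format the message with its arguments first, so values passed as
        # arguments (e.g. logger.error("Card %s", pan)) are redacted as well.
        record.msg = redact(record.getMessage())
        record.args = ()
        return True  # keep the record; only its content is changed
```

Attaching the filter to a handler (`handler.addFilter(RedactingFilter())`) rather than to a single logger means every record that reaches that handler is scrubbed, including error messages raised deep in a call flow.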

Challenge 2: GLBA opt-out rights

Violation: Using or sharing a customer's data after they have opted out. Under GLBA, customers can opt out of having their nonpublic personal information shared with nonaffiliated third parties, subject to statutory exceptions, so what can and can't be done with opted-out data is nuanced. Fine exposure: up to $100,000/year.

Challenge 3: Collection calls (FDCPA compliance)

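Violation: A payment reminder is placed outside the allowed calling window or uses threatening language. Fine exposure: statutory damages of up to $1,000 per individual action, plus actual damages and potential class action.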
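One part of an outbound reminder check, the calling window, can be sketched in Python. This is a minimal example that assumes the commonly cited FDCPA window of 8 a.m. to 9 p.m. in the consumer's local time; the function name `may_place_call` and the constants are hypothetical, and state rules, call-frequency limits, and message content are not covered here.

```python
from datetime import datetime, time, timedelta, timezone, tzinfo

# Assumed window: calls before 8:00 or after 21:00 local time are treated as not allowed.
EARLIEST_CALL = time(8, 0)
LATEST_CALL = time(21, 0)


def may_place_call(now_utc: datetime, consumer_tz: tzinfo) -> bool:
    """Return True if the consumer's local time falls inside the assumed calling window."""
    if now_utc.tzinfo is None:
        # A naive timestamp would be silently misinterpreted, so reject it.
        raise ValueError("now_utc must be timezone-aware")
    local_time = now_utc.astimezone(consumer_tz).time()
    # Conservative choice: exactly 21:00 is treated as outside the window.
    return EARLIEST_CALL <= local_time < LATEST_CALL


# Usage: a consumer at UTC-5; 12:30 UTC is 07:30 local, so the call should wait.
consumer_tz = timezone(timedelta(hours=-5))
too_early = may_place_call(datetime(2024, 1, 15, 12, 30, tzinfo=timezone.utc), consumer_tz)
```

In practice the time zone would come from the consumer's location (for example via `zoneinfo.ZoneInfo`) rather than a fixed offset, so that daylight saving changes are handled.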

Make Your Finance Voice AI Verifiably Safe

Join hundreds of organizations ensuring confidence in voice AI deployments with expert compliance validation.